What is mathematics?

On the definition of the term mathematics, there seems to be no universal agreement. This is partly because the depth and scope of mathematics have expanded over the centuries, especially with the insights into its foundations during the late 19th and early 20th centuries. It is not simply about numbers and measurement, geometry and space, algebra and calculus. These are some examples of fields of mathematics, applications of mathematical enquiry to address a particular problem domain. In any case, there is little to be gained from trying to give this word a definition. Rather, an understanding of the nature of mathematics would be more illuminating. This article attempts to provide such an insight, by showing why mathematics is such a unique discipline, how logic underpins its foundations, and how it may provide answers to the deepest questions in science and philosophy.

All Mathematics is Symbolic Logic

Bertrand Russell, 1903

In ancient times, mathematics was a useful tool for creating abstract models of the properties of, and relationships between, objects observed in the real world. The earliest example of this was the concept of quantity. Prehistoric humans recognised a certain property shared between, say, the fingers on one hand, the toes on one foot, and the petals of a geranium. Numbers were invented for communicating this concept, with the number ‘five’ being used to identify the shared property in the aforementioned scenario. Arithmetic followed, out of the necessity for calculation in matters of trade and taxation. The Babylonian sexagesimal (base 60) number system was the first positional number system, and its advent greatly simplified and sped up the process of calculation. Primitive forms of algebra and geometry arose in response to the increase in societal and technological complexity, especially in the disciplines of navigation, engineering and astronomy.

The development of ancient mathematics was driven by the desire to efficiently find solutions to everyday practical problems. Whether it was calculating the amount of change you should receive from a purchase, or building the Pyramids, mathematics was firmly grounded in the real world. But prior to the mathematics of the Greeks, early mathematics did not resemble the rigorous nature of the mathematics of today. These discoveries were more like mathematical ‘rules of thumb’; recipes that seemed to work effectively, because they produced correct answers time and time again, without fail. A deeper understanding of why they worked was less important. In this sense, pre-Greek mathematics was primarily based on inductive reasoning. Observing that the formula for calculating the area of a circle worked effectively in every case seen so far, the formula was accepted as universally valid. That is, it will work for any circle that may ever be conceived, now and forever, anywhere in the world.

Inductive reasoning is the basis of modern science. It relies on observation of phenomena to support a particular hypothesis. Europeans up until the end of the 17th century had come to the conclusion, via inductive reasoning, that all swans were white. This was not an unreasonable conclusion to make. All swans observed thus far by Europeans, since the beginning of history, were white. But the fragility of inductive reasoning is that one counterexample will bring the entire hypothesis crashing down instantly. This is, of course, what happened with the ‘white swan’ theory, when black swans were discovered in Australia. At best, inductive reasoning is a level of confidence, a probability, but never a certainty.

But what if you could develop a system of reasoning that ensured you could reach an unassailable conclusion? One in which you could prove a hypothesis (known in mathematics as a conjecture) to be true? And it would forever be true, and had always been true? And it would not be possible ever to disprove it? Such a system, known as deductive reasoning, was developed over time by the ancient Greeks. A system of deductive reasoning is defined by its rules of inference. In essence, a rule of inference generates a new truth from previously established truths. The previously established truths form the premises of the rule, and the newly generated truth is known as the conclusion.

To give an example, let’s examine the rule of inference known as modus ponens. This rule encapsulates a simple deduction that seems to require no justification. It is sometimes written like this: \[{ \begin{array}{l} \text{If A, then B}\\ \text{A}\\ \hline \text{B} \end{array} }\]

The statements above the line represent the premises, and the statement below the line is the conclusion. If we assert that the premises are true, then the conclusion must be true. The variables A and B can be substituted with any logical statement; a statement that must be either true or false. Let \(A\) be the statement “It is raining” and \(B\) be the statement “The grass is wet”. We then get: \[{ \begin{array}{l} \text{If it is raining, then the grass is wet}\\ \text{It is raining}\\ \hline \text{The grass is wet} \end{array} }\]

The power of deductive reasoning comes from being able to chain together a series of inferences into a deductive argument. That is, the conclusion of one inference becomes a premise of the next inference in the chain. In the next article of this series, a real deductive system known as natural deduction will be explored.
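As a rough illustration of chaining, the sketch below applies modus ponens repeatedly until nothing new can be concluded. It is only a toy model, not a real deductive system: statements are plain strings, the function name `forward_chain` is an assumption made for this example, and the second rule (about slippery ground) is a hypothetical addition to the rain example above.

```python
def forward_chain(facts, rules):
    """Repeatedly apply modus ponens until no new conclusions appear.

    facts: set of statements asserted to be true (the premises).
    rules: list of (antecedent, consequent) pairs, each read as "if A, then B".
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for antecedent, consequent in rules:
            # Modus ponens: from "if A, then B" and "A", conclude "B".
            if antecedent in known and consequent not in known:
                known.add(consequent)
                changed = True
    return known


rules = [
    ("it is raining", "the grass is wet"),
    ("the grass is wet", "the ground is slippery"),  # hypothetical second rule
]
derived = forward_chain({"it is raining"}, rules)
print(sorted(derived))
```

The conclusion of the first inference ("the grass is wet") serves as a premise of the second, which is the chaining described above.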

Using deductive logic as a basis, mathematics essentially becomes a sort of game. Each field of mathematics begins by declaring its initial premises, those statements that are assumed to be true without justification. These are known as the axioms of the system. Using the machinery provided by the rules of inference, we can input these axioms into chains of inference, discovering new truths along the way. These new truths become theorems of the system. This is the daily life of the working mathematician; to understand what a system is capable of by discovering theorems of that system.

One of the earliest examples of applying an axiomatic approach to mathematics was Euclid’s Elements. This text on geometry and number theory became the authoritative reference on the subject for thousands of years. All of the theorems contained within were proven, via deductive logic, from carefully crafted definitions and postulates that formed the axioms of the system. For example, in Euclid’s treatment of geometry, the basic primitives of the system, such as points, lines and planes, were defined. From these simple definitions, more complex objects such as triangles and other polygons could be constructed. Furthermore, all of the theorems of geometry, such as Pythagoras’ theorem, could be proven. This axiomatisation of geometry was taught for thousands of years, and is still taught in some schools today as a first exposure to deductive reasoning.

The development of deductive reasoning gave mathematics a unique status amongst the sciences; its theorems, once proven, could never be disputed, so long as one accepted the truth of the axioms. This is in contrast with all other sciences, whose theories may be accepted as truth for hundreds of years, only to be discarded and replaced almost overnight by new theories once new evidence comes to light.

After the fall of the Roman Empire, Europe entered a period of mathematical stagnation throughout the Dark Ages. While many of the Greek works on mathematics were lost to Europe, they were preserved by the Arabic cultures in the Middle East. During this time, deductive reasoning was pursued in the development of algebra, analytic geometry and the precursors to calculus.

During the Renaissance, the mathematics of the Islamic world slowly started gaining influence in Europe. This led to an explosion in the development of new mathematics, such as the infinitesimal calculus of Newton and Leibniz, Descartes’ analytical geometry, probability, complex numbers and much more. Despite this, there was little development and refinement of the deductive logic on which this mathematics was based until the beginning of the 19th century. Logical arguments in mathematics were, prior to this time, constructed in natural language (such as Latin or English). However, natural language is a poor basis for making precise, rigorous statements. Fraught with ambiguity and ill-defined or circular definitions, not to mention being long-winded and difficult to follow, proofs in natural language may suffer from being inconsistent or invalid in subtle ways that are difficult to detect.

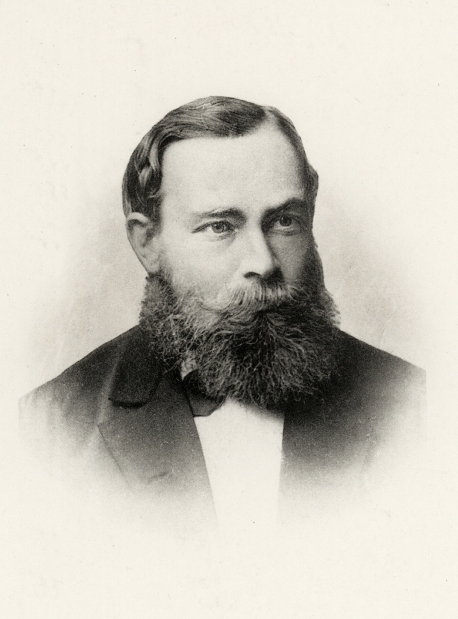

Newton (top) and Leibniz, the independent developers of differential and integral calculus

Such an issue arose after the development of infinitesimal calculus. Many criticised the concept of an infinitesimal – an infinitely small quantity – as being nonsensical. The most well-known of these criticisms was Bishop George Berkeley’s dismissal of infinitesimals as “the ghosts of departed quantities”. The criticism was well-founded. The proofs within calculus at some places treated infinitesimals as small yet non-zero values, and at other places they were considered to have no value at all. How can a value be both zero and non-zero at the same time?

It wasn’t for another 150 years that calculus was placed on a rigorous foundation, by clearly defining many concepts that were rather vaguely defined or not defined at all. For example, there existed no satisfactory definition of what a function or a real number was. The work of Cauchy and Weierstrass in the 1800s developed a solid foundation for calculus based on the rigorously defined concepts of limit and continuity, eliminating the need for infinitesimals. But it became clear that deductive logic needed a formal, precise and concise language of its own that avoided the pitfalls of natural language. It should be noted that attempts at such a system were made prior to the modern development of logic which began in the early to mid-19th century. But mostly these systems went unnoticed, were underdeveloped, or were abandoned.

The publication of George Boole’s Mathematical Analysis of Logic in 1847 spurred the development of symbolic logic, a major step towards a formal system of logic. Boole developed an ‘algebra of logic’ by using symbolic variables and operators to manipulate logical statements. This same algebra (now known as Boolean algebra) could be applied both to a logic of sets and to a logic of propositions.

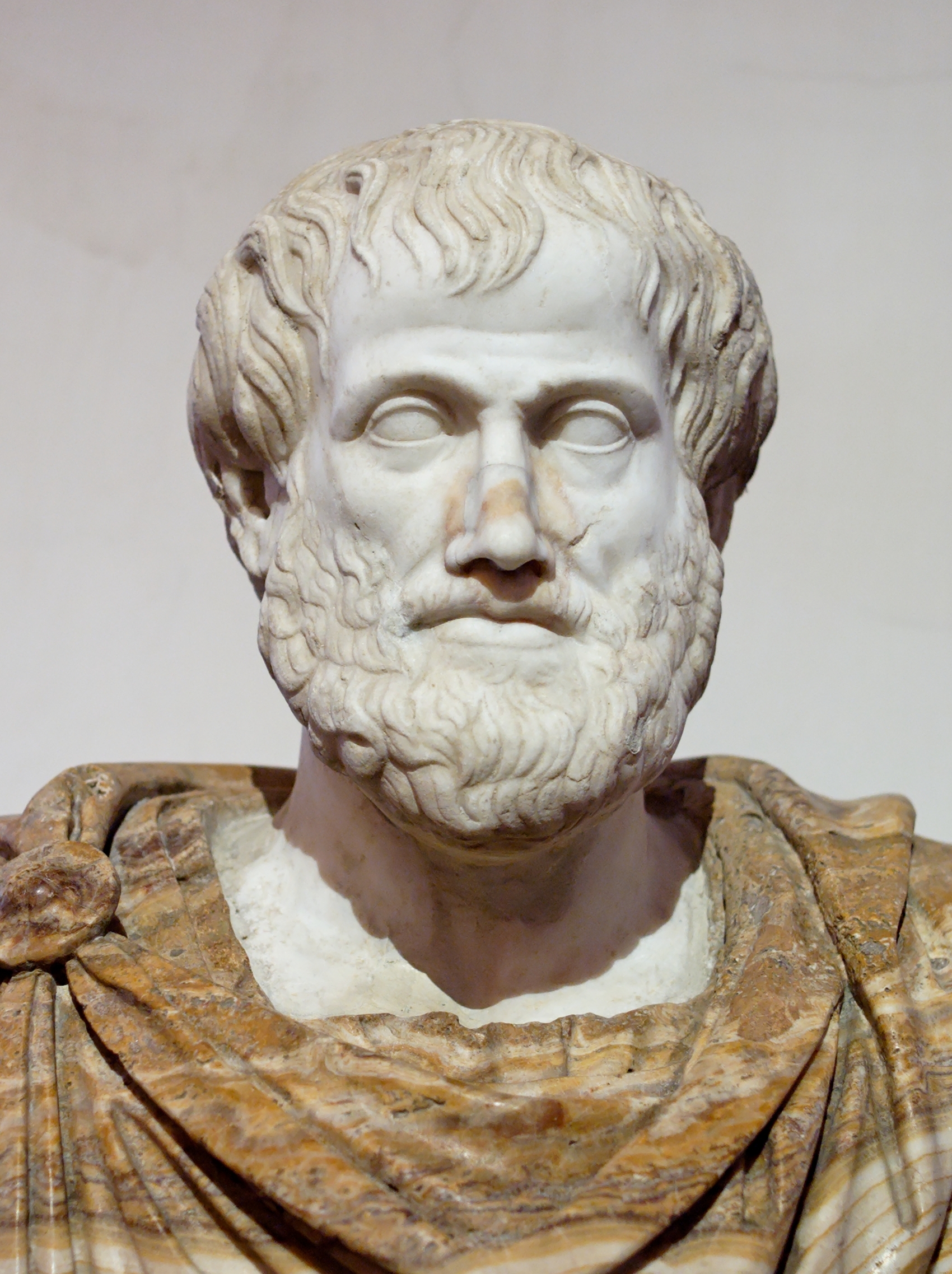

The logic of sets is a system that allows us to make logical deductions about collections of objects, known as sets. Each set contains zero or more objects, known as the elements of the set. Boole derived his logic of sets from Aristotle’s syllogistic logic. The operations on sets include the familiar operations of union, intersection and complement, and introduce the concept of the empty set (a set containing no elements) and the universe (a set containing every element).

The logic of propositions studies the truth of propositions. A proposition is a statement that is either true or false. Boole’s logic of propositions derives from the propositional logic of the Stoics. It allows us to deconstruct complex or compound propositions into simpler atomic propositions connected by logical operators. These operators include AND, OR and NOT.

To provide a concrete example, let’s show how the logic of propositions can be developed algebraically, what is today called propositional logic or the propositional calculus. This is an algebra of truth, so we only require two values: true and false. These are typically represented as the numbers 1 and 0 respectively. We then define two binary operators (operators that take two values or operands) called AND (\(\land\)) and OR (\(\lor\)). We also define one unary (single operand) operator called NOT (\(\neg\)). These operators will yield a truth value depending on the truth value of the operands. Let P and Q represent two propositions. We can define the meaning of each logical operation in the table below:

| Operation | Result |
|---|---|
| \(P \land Q\) | Yields a true value only when both P and Q are true, otherwise false. |
| \(P \lor Q\) | Yields a false value only when both P and Q are false, otherwise true. |
| \(\neg P\) | Yields the opposite truth value of P. When P is true, \(\neg P\) is false. When P is false, \(\neg P\) is true. |

This allows an algorithmic calculation of the truth of a complex proposition, such as \(P \land \neg (\neg P \lor Q)\) knowing the truth values of the component propositions P and Q. Boole presented a set of axioms in his new algebra, making it possible to prove new logical theorems from existing ones, and to demonstrate logical equivalences between propositional formulae.
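The algorithmic calculation mentioned above can be sketched in a few lines of Python. This is only an illustration: the helper names `formula` and `truth_table` are assumptions made for this example, and Python’s built-in `and`, `or` and `not` stand in for \(\land\), \(\lor\) and \(\neg\).

```python
from itertools import product


def formula(p, q):
    # P AND NOT (NOT P OR Q)
    return p and not ((not p) or q)


def truth_table(f, arity):
    """Evaluate f on every combination of truth values for its operands."""
    return [(values, f(*values)) for values in product([True, False], repeat=arity)]


table = truth_table(formula, 2)
for (p, q), result in table:
    # Print each row using 1 for true and 0 for false.
    print(int(p), int(q), int(result))
```

Running through all four rows shows that the formula is true only when P is true and Q is false, so it has the same truth table as \(P \land \neg Q\).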

| OR form | AND form | Axiom |
|---|---|---|
| a ∨ (b ∨ c) = (a ∨ b) ∨ c | a ∧ (b ∧ c) = (a ∧ b) ∧ c | Associativity |
| a ∨ b = b ∨ a | a ∧ b = b ∧ a | Commutativity |
| a ∨ (a ∧ b) = a | a ∧ (a ∨ b) = a | Absorption |
| a ∨ 0 = a | a ∧ 1 = a | Identity |
| a ∨ (b ∧ c) = (a ∨ b) ∧ (a ∨ c) | a ∧ (b ∨ c) = (a ∧ b) ∨ (a ∧ c) | Distributivity |
| a ∨ ¬a = 1 | a ∧ ¬a = 0 | Complements |

For example, De Morgan’s law can be proven from the axioms. This law states that for any two propositions P and Q, making the statement “It is not true that P and Q are both true” is the same as saying “Either P is false or Q is false”. In algebraic form, where the \(\equiv\) symbol represents logical equivalence:

\[\neg(P \land Q) \equiv \neg P \lor \neg Q\]
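Because there are only two truth values, an equivalence like this can also be checked by brute force over every assignment. The sketch below does that for De Morgan’s law. Note that this is an exhaustive truth-table check, not a proof from the axioms, and the helper name `equivalent` is an assumption made for this example.

```python
from itertools import product


def equivalent(f, g, arity):
    """True when f and g agree on every assignment of truth values."""
    return all(f(*v) == g(*v) for v in product([True, False], repeat=arity))


def lhs(p, q):
    # NOT (P AND Q)
    return not (p and q)


def rhs(p, q):
    # (NOT P) OR (NOT Q)
    return (not p) or (not q)


print(equivalent(lhs, rhs, 2))
```

The same helper can compare any two propositional formulae with the same number of variables.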

The same algebraic structure also models the logic of sets. Rather than AND, OR and NOT, we have the analogous operations of intersection (\(\cap\)), union (\(\cup\)), and complement (~). Boole also used the number 0 to represent the empty set, and 1 to represent the universe. In modern times, the symbols \(\varnothing\) (for the empty set), and U (for the universe) are more commonly used. Note how the axioms are of exactly the same form, showing the symmetry between these two different domains of logic.

| Union form | Intersection form | Axiom |
|---|---|---|
| a ∪ (b ∪ c) = (a ∪ b) ∪ c | a ∩ (b ∩ c) = (a ∩ b) ∩ c | Associativity |
| a ∪ b = b ∪ a | a ∩ b = b ∩ a | Commutativity |
| a ∪ (a ∩ b) = a | a ∩ (a ∪ b) = a | Absorption |
| a ∪ ∅ = a | a ∩ U = a | Identity |
| a ∪ (b ∩ c) = (a ∪ b) ∩ (a ∪ c) | a ∩ (b ∪ c) = (a ∩ b) ∪ (a ∩ c) | Distributivity |
| a ∪ ~a = U | a ∩ ~a = ∅ | Complements |
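As a sanity check, the set axioms can be tested exhaustively on a small universe using Python’s `frozenset`, whose `|`, `&` and `-` operators give union, intersection and difference. The three-element universe and the helper names below are assumptions made for this sketch. Passing the check on one finite universe illustrates the axioms but does not prove them in general.

```python
from itertools import combinations, product

U = frozenset({1, 2, 3})  # hypothetical small universe
EMPTY = frozenset()


def subsets(s):
    """Return every subset of s as a frozenset."""
    items = list(s)
    return [frozenset(c) for r in range(len(items) + 1) for c in combinations(items, r)]


def complement(a):
    # ~a: everything in the universe that is not in a.
    return U - a


def axioms_hold():
    for a, b, c in product(subsets(U), repeat=3):
        checks = [
            a | (b | c) == (a | b) | c, a & (b & c) == (a & b) & c,              # associativity
            a | b == b | a, a & b == b & a,                                      # commutativity
            a | (a & b) == a, a & (a | b) == a,                                  # absorption
            a | EMPTY == a, a & U == a,                                          # identity
            a | (b & c) == (a | b) & (a | c), a & (b | c) == (a & b) | (a & c),  # distributivity
            a | complement(a) == U, a & complement(a) == EMPTY,                  # complements
        ]
        if not all(checks):
            return False
    return True


print(axioms_hold())
```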

Boole’s algebra of logic has significant application in the field of computer science. Computers are essentially an arrangement of ‘logic gates’, electrical circuitry that mimics the operations of Boolean algebra. Therefore, Boolean algebra is used in designing and simplifying such systems.

Boole demonstrated that logic can be modelled as a mathematical structure, making it a mathematical branch of study in its own right. Later, many mathematicians were considering a seemingly inverse question: is mathematics just an extension of logic? That is, can all branches of mathematics essentially be reduced to a finite number of axioms written in symbolic form? What were these axioms? And are there primitive mathematical objects that could be considered the ‘atoms’ of mathematics, from which all other complex mathematical objects could be constructed? The latter hypothesis had been supported by observations made by Richard Dedekind. More sophisticated sets of numbers, such as the rationals, reals and complex numbers, could ultimately be derived from the naturals. At the same time, Georg Cantor was constructing an axiomatic ‘theory of sets’ capable of constructing arithmetic objects from sets. Perhaps sets were the atoms of mathematics?

The mathematical school of thought known as logicism pursued the goal of reducing all mathematics to a set of logical axioms. This movement began with the publication of the Begriffsschrift in 1879, by the German mathematician Gottlob Frege. In this book, Frege outlined the details of his new system of deductive logic, which today is recognised as the first formulation of predicate logic. Frege’s system unified set logic and propositional logic into a single deductive system, by introducing predicates and quantifiers. Predicates are truth functions, whose parameters can be any element within the universe. The predicate returns a truth value that is dependent on the parameters passed to it. For example, if we define our universe U to be all people who have ever lived, we could define a predicate P as follows:

P(x,y,z) = “x’s mother is y and x’s father is z”

In essence, predicates are just parameterised propositions, templates for a logical truth that are instantiated by substituting the predicate’s parameters with elements from U.

Quantifiers are logical operators that allow us to make statements about groups of elements within the universe. The universal quantifier (\(\forall\)) allows us to assert that a logical expression is true for all elements of the universe. The existential quantifier (\(\exists\)) asserts that a logical expression is true for some (one or more) elements within the universe. For example, if the predicate A(x) is true for all elements x within the universe, and the predicate B(x) is true for at least one element within the universe, we would write:

\(\forall x(A(x))\)

\(\exists x(B(x))\)

The development of predicate logic allows the unambiguous and concise expression of statements that were troublesome to define in natural language. For example, the statement “Everyone likes someone” is ambiguous in its meaning. Does this mean that there is one person that everyone likes? Or does each person like someone uniquely, such that each person only has one object of affection? Or is it somewhere in between these two extremes? Predicate logic can make a statement more precise. Let the predicate P be defined as P(x,y) = “x likes y”. The initial proposition that everyone likes someone could be written as:

\[\forall x(\exists y(P(x,y)))\]

If we want to convey the meaning that there is one person that everyone likes, we could express it thus:

\[\exists y(\forall x(P(x,y)))\]

If we wanted to convey that each person only has a single object of affection, a more complex predicate is required.

\[\forall x(\exists y(P(x,y) \land \forall z(P(x,z) \to y = z)))\]
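Over a finite universe, the quantifiers map directly onto Python’s `all` (for \(\forall\)) and `any` (for \(\exists\)), which makes the difference between the three readings easy to see. The sketch below uses an invented universe of three people and an invented “likes” relation, both purely hypothetical data for illustration. The implication \(P(x,z) \to y = z\) is written as `(not P(x, z)) or y == z`.

```python
people = ["ann", "bob", "cat"]  # hypothetical universe
likes = {("ann", "bob"), ("bob", "bob"), ("cat", "bob"), ("cat", "ann")}  # hypothetical relation


def P(x, y):
    """The predicate "x likes y"."""
    return (x, y) in likes


# For all x there exists y such that P(x, y).
everyone_likes_someone = all(any(P(x, y) for y in people) for x in people)

# There exists y such that for all x, P(x, y).
someone_liked_by_all = any(all(P(x, y) for x in people) for y in people)

# For all x there exists y such that P(x, y), and any z that x likes equals y.
exactly_one_each = all(
    any(P(x, y) and all((not P(x, z)) or y == z for z in people) for y in people)
    for x in people
)

print(everyone_likes_someone, someone_liked_by_all, exactly_one_each)
```

With this relation the first two statements are true (everyone likes bob), while the third is false because cat likes two people. The three formulae therefore make different claims.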

During the late 19th century, efforts were made to axiomatise natural number arithmetic. These included efforts by Hermann Grassmann, C. S. Peirce, and Richard Dedekind. This culminated in the 1889 publication by the Italian mathematician Giuseppe Peano of his axioms, which became known as Peano arithmetic. This formulation, with minor modifications, is the standard theory of natural number arithmetic in use today.

Frege’s follow-up publication, the two-volume Grundgesetze der Arithmetik (Basic Laws of Arithmetic), attempted to develop its own axiomatic theory of natural number arithmetic. Unfortunately, a problem was identified with one of Frege’s axioms (Basic Law V) which made Frege’s system of logic inconsistent. A logical system is inconsistent when we can prove a contradiction: when a logical statement and its negation can both be shown to be true. That is, for some proposition P, both P and \(\neg P\) are true. This inconsistency was discovered by Bertrand Russell just as the Grundgesetze was being printed, and became known as Russell’s paradox. To summarise the situation, Frege’s system allowed one to construct a set based on some predicate P. In essence, it contained the following logical axiom:

\[\exists y(\forall x(x \in y \iff P(x)))\]

This is read as “There exists a set y [\(\exists y\)], such that for all x [\(\forall x\)], x is an element of y [\(x \in y\)] if and only if [\(\iff\)] P(x) is true”. The predicate \(\in\) is a primitive binary predicate introduced as a non-logical symbol into the deductive system. The meaning of this operator is defined by the axioms, such that \(x \in y\) is true when the element x is contained within the set y. The logical equivalence operation \(a \iff b\) is true only when the truth values of a and b are the same.

This axiom, which has come to be known as the axiom of unrestricted comprehension, encapsulates our intuitive notion that the members of a set are defined by some property P. For example, we can conceive of a set of all ripe bananas, or the set of all words in English, or the set of all romantic relationships that have ever occurred. These sets are defined by properties that can be encoded in predicates. P may be defined as “x is a ripe banana”, “x is an English word”, and “x is a romantic relationship”. But in a formal deductive system, these predicates must be constructed using only logical symbols. Russell crafted a predicate that ultimately leads to a contradiction. The predicate was:

\[P(x) = x \notin x\]

That is, x is not an element of itself. Substituting this predicate for P within the axiom of unrestricted comprehension asserts the existence of a set y containing exactly those sets that are not elements of themselves. Unfortunately, by asking whether y is an element of itself (that is, by taking x to be y), we can derive, via the axioms and rules of inference in Frege’s system, the following contradiction.

\[y \in y \iff y \notin y\]

That is, y is a member of itself if and only if y is not a member of itself. Finding an inconsistency within a deductive system essentially renders the entire system worthless. The entire foundations of logicism faced a crisis, as all axiomatic systems of arithmetic thus far either implicitly or explicitly relied on the axiom of unrestricted comprehension, including Peano arithmetic. Frege subsequently abandoned his logicist ambitions, but others worked to salvage the situation.

The contradiction was resolved by Russell with his own axiomatic system of ramified type theory, which was expounded in the three-volume Principia Mathematica (1910, 1912, 1913), co-authored with A.N. Whitehead. Russell’s paradox highlights the problem of allowing self-reference within a system. It allows one to reason about ‘the set of all sets’. Suppose such a set existed. Being a set, it should therefore be an element of itself, since it is the set of all sets. Such a notion does not make sense. Russell’s theory of types defers self-referential notions such as ‘set of all sets’ by defining a hierarchical system of types. Objects of a particular type at some level of the hierarchy may only be defined in terms of objects at lower levels. So, we can define objects at a level above those of sets, called classes, where we can define a class of all sets. Note that this is now not self-referential, but doesn’t this surely just kick the can down the road? Don’t we now have to resolve the problem of ‘classes of classes’? Russell’s hierarchy of types is infinite; we simply keep defining levels one after the other as we wish, kicking the can down the road forever.

At around the same time, the German mathematician Ernst Zermelo was developing a reformulation of Cantor’s set theory that was free from Russell’s paradox. In contrast to Russell, Zermelo disallowed self-referential notions entirely by introducing an axiom of restricted comprehension, now more commonly known as the axiom schema of separation. This theory essentially rejects all self-referential notions such as the set of all sets as beyond the scope of the theory. With contributions from Abraham Fraenkel and others, this led to the development of the canonical Zermelo-Fraenkel set theory with the axiom of choice (ZFC). Being simpler than Russell’s theory of types, it has become the most well-established system of set theory in use today.

ZFC succeeds in providing an axiomatic system of set theory, where the elements of the universe are pure sets only. That is, the members of sets are sets themselves, with the simplest of these being the empty set. From this theory, all other branches of mathematics can be constructed. For example, the natural numbers can be defined purely in terms of sets. The number zero is defined to be the empty set, with all other numbers being generated by a successor function S, defined in the following way:

\[S(a) = a \cup \{a\}\]

S(a) yields the next natural number immediately after a. Starting from 0, defined as the empty set, we can construct the natural numbers.

\[\begin{align*}
S(0) &= 0 \cup \{0\} = \varnothing \cup \{\varnothing\} = \{\varnothing\} = 1 \\
S(1) &= 1 \cup \{1\} = \{\varnothing\} \cup \{\{\varnothing\}\} = \{\varnothing, \{\varnothing\}\} = 2 \\
S(2) &= 2 \cup \{2\} = \{\varnothing, \{\varnothing\}\} \cup \{\{\varnothing, \{\varnothing\}\}\} = \{\varnothing, \{\varnothing\}, \{\varnothing, \{\varnothing\}\}\} = 3 \\
&\text{…etc}
\end{align*}\]
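This construction can be mirrored in Python with `frozenset`, which is hashable and can therefore be an element of another set. The sketch below is an illustration under that modelling assumption rather than a formalisation of ZFC. The function names `successor` and `natural` are chosen for this example.

```python
def successor(a):
    # S(a) = a ∪ {a}
    return a | frozenset({a})


ZERO = frozenset()  # zero is defined as the empty set


def natural(n):
    """Build the set representing the natural number n by applying S n times."""
    result = ZERO
    for _ in range(n):
        result = successor(result)
    return result


three = natural(3)
print(len(three))            # the set for n has exactly n elements
print(natural(2) in three)   # each smaller number is an element of the larger one
```

A useful consequence of this encoding is that the set representing n contains exactly the sets representing 0 up to n − 1, so “less than” on numbers corresponds to “is an element of” on sets.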

The work of Frege, Russell and many others was crucial in delivering insights into the foundations of mathematics. The mathematician David Hilbert compiled a list of the desired features to be present within a deductive system, becoming a manifesto of logicism known as Hilbert’s program. Such a system should be consistent, complete and decidable. As we have mentioned previously, a consistent system should not be able to prove that both P and its negation are true, where P is any proposition. A complete system requires that every true proposition within the system be provable within the system. All true statements should be derivable from the axioms. Finally, a decidable system possesses an algorithm that can determine the truth or falsity of any proposition P within the system.

Much of the early 20th century saw logicians beginning to investigate deductive systems mathematically. That is, developing mathematical models of deductive systems in order to study their capabilities and limitations. These included questions about their consistency, completeness and decidability. Unfortunately for Hilbert, many of the goals of his program would be shown to be unattainable. In 1931, mathematician Kurt Gödel would publish his incompleteness theorems, in which he proved that any consistent logical system powerful enough to describe natural number arithmetic was incomplete. That is, there exist true statements within the system that cannot be proven within the system. It follows from this theorem that it is also impossible to prove the consistency of a system within that system itself. In 1949, Alfred Tarski showed that any theory of natural number arithmetic was undecidable.

This blow to the logicist program was not entirely fatal though. From Gödel’s results, the field of mathematical logic or metamathematics was born, being an active field of research to this day. This has spawned branches of investigation into the nature of deductive systems that include proof theory, model theory, set theory and recursion theory. As disastrous as Gödel’s results may seem, formal deductive systems still form the foundation of mathematics today. While a system cannot prove its own consistency, consistency proofs of a system can be constructed within a stronger system. For example, the consistency of Peano arithmetic can be proven from ZFC. While the incompleteness theorem shows it is impossible to derive every truth within the system, it is possible to formalise all forms of mathematics that are useful to anyone. In particular, ZFC has proven to be an effective theory of sets on which almost all mathematics can be constructed.

Throughout its history, mathematics has evolved from a system of inductive reasoning that stems from observation of real world phenomena to a method of deductive reasoning that allowed the construction of mathematical models transcending any known application. This trend of pure mathematics, exploring mathematical structures for their own sake, has been essential for the advancement of many fields such as science, economics and philosophy. For example, the square root of negative one is a concept that is intuitively difficult to grasp, because it is outside of our experience of what a number is. But the development of complex numbers, a system of algebra which allows the presence of such an object, has been an immensely useful tool within science and engineering. By experimenting with Euclid’s system of geometry, in particular removing the axiom known as the parallel postulate, we can create new non-Euclidean geometries, such as elliptic and hyperbolic geometries that are useful in navigation and cosmology. Before the 19th century, Euclidean geometry was assumed to be the only geometry. Without non-Euclidean geometry, we would not have Einstein’s theory of general relativity.

The development of exotic mathematical models seems to precede a discovery for its use in many cases, demonstrating that pure mathematics is not a frivolous pursuit. Many of the deepest theories in physics are now based on mathematics that was once entirely abstract and seemingly removed from any sort of reality. Examples of this are the theorems of quantum field theory, whose concepts are so unintuitive that it is effectively impossible to explain them in a way that can be related to our lived experience. In other words, mathematics becomes the only method of rigorous communication on the frontiers of scientific discovery.

The attempt to unify quantum mechanics with general relativity has led to the development of string theory. This is the pinnacle of unintuitive mathematics, declaring that the universe is composed of 10 or more dimensions; a far cry from our three-dimensional experience (four if you include time). Unlike the theories of quantum mechanics and general relativity, which are supported with observational evidence, string theory is an entirely pure mathematical hypothesis about the ultimate nature of reality. No evidence exists yet which supports the hypothesis, but this is because no one has yet devised an experimental process to generate this evidence. Could string theory lead to a unified theory of everything? Could the abstract world of mathematics, to some extent, be our reality? Perhaps time will yield the answers.